Howdy! I’m a Ph.D. candidate in Statistics at Boston University, co-advised by Prof. Debarghya Mukherjee and Prof. Luis Carvalho, and I also collaborate with Prof. Nabarun Deb. Before BU, I earned my M.A. in Statistics from Columbia University and my B.S. in Mathematics from Shandong University, including a year of joint training at the Academy of Mathematics and Systems Science (AMSS), Chinese Academy of Sciences. My research sits at the intersection of statistics and machine learning, where I develop theoretically grounded transfer-learning and representation-learning methods, spanning optimal transport, graph mining, and multimodal learning, for structured, heterogeneous data in low-sample, high-dimensional, and non-IID settings.

The question that keeps me up (in a good way):

How can we reuse past knowledge when the world—and the data—won’t sit still?

In statistical learning, this is about transferring geometry or smoothness from a well-understood source distribution to a smaller, noisier target under shift. In reinforcement learning, the source might be prior trajectories, simulators, or related tasks, while the target is the evolving environment, so we need principled rules for what to keep, what to adapt, and what to forget. And yes! LLMs/VLMs make this even more exciting (and tricky): they already contain a lot of cross-domain knowledge, but the real challenge is extracting and specializing it safely for downstream tasks without overfitting, hallucination, or misalignment.

What I build

Theory that supports practice: Minimax rates · oracle inequalities · regret bounds · safe-transfer criteria under covariate or structural shift.

Graph-structured transfer: Aligning and transporting information across graphs and manifolds, for robust transfer when correspondence is messy or unknown.

RL & bandits under drift: Warm-started policies with uncertainty-aware adaptation for reliable sequential decision-making in changing environments.

Transfer for LLMs / VLMs: Controlled adaptation · domain grounding · structure-preserving fine-tuning, so models adapt without getting sloppy.

Curious about my research? I’ve put together beginner-friendly slide decks on my main research directions: transfer learning, graph learning, optimal transport, and LLMs for time series.

Along my academic journey, I have been deeply fortunate to study and conduct research under the guidance of inspiring scholars, including Prof. Zhanxing Zhu, whose influential work includes Spatio-Temporal Graph Convolutional Networks (STGCN) for traffic forecasting, and Prof. Yongshun Gong. Their perspectives on deep learning, representation learning, and structured spatio-temporal systems have profoundly shaped how I think about evolving, heterogeneous data, and have guided my pursuit of principled transfer learning methods.

Beyond theory and modeling, I am drawn to building AI applications that reflect how I see people and the world. I have always felt that human beings are more than their outward forms, that something of the spirit, memory, and inner life exceeds the body that temporarily carries it. That is why I am especially fascinated by cinema, atmosphere, and emotionally resonant digital experiences ✨

🔥 News

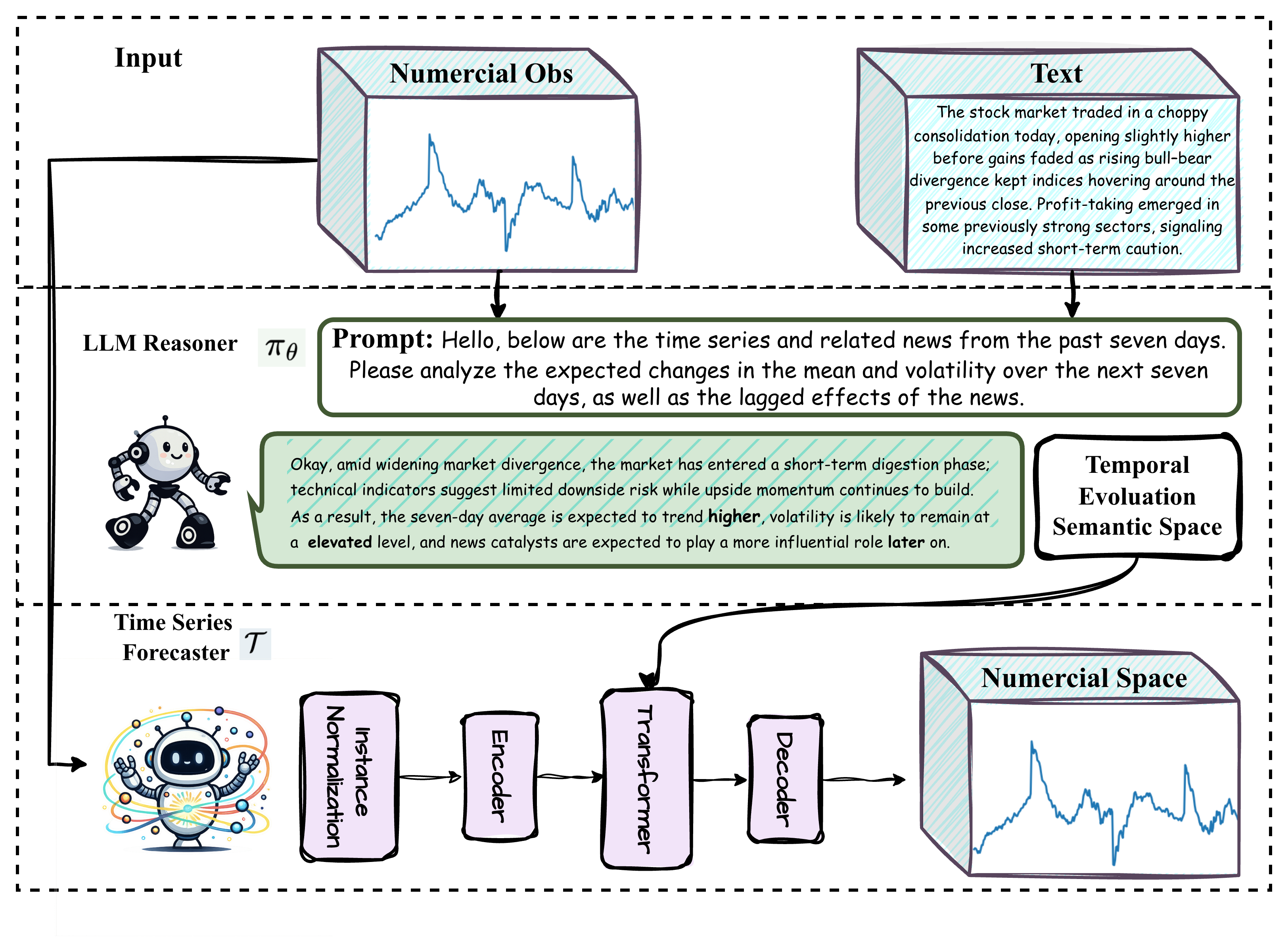

- 2026.05: 🎉 My co-first-author paper “From Text to Forecasts: Bridging Modality Gap with Temporal Evolution Semantic Space” was selected for an Oral presentation (top 0.5%) at ICML 2026!

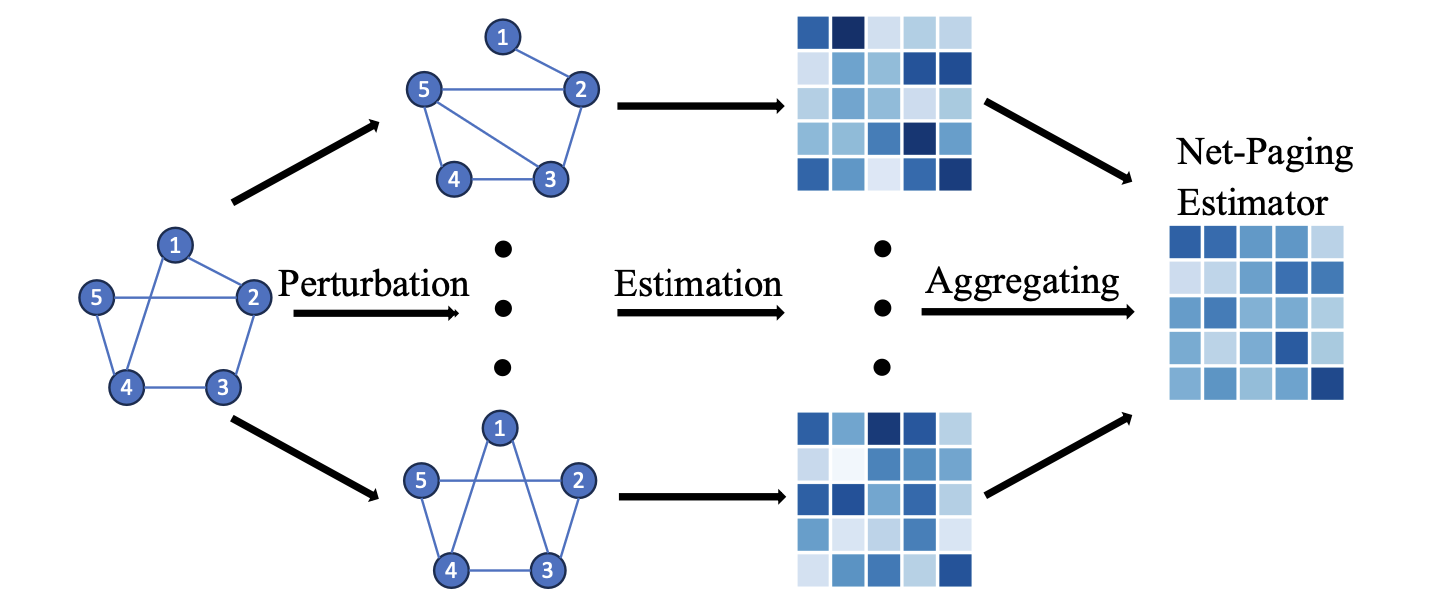

- 2026.05: 🎉 My co-authored paper “Network Perturbation Aggregation for Graphon Estimation” has been accepted by SLADS!

- 2026.04: 🎉 My co-first-author paper “From Text to Forecasts: Bridging Modality Gap with Temporal Evolution Semantic Space” was accepted to ICML 2026 and selected as a Spotlight!

- 2026.04: 🚀 I’ll be joining Amazon as an Applied Scientist this summer, based in the Bay Area, California!

- 2026.04: 🎉 I am honored to receive the Dean’s Dissertation Fellowship from the Graduate School of Arts and Sciences!

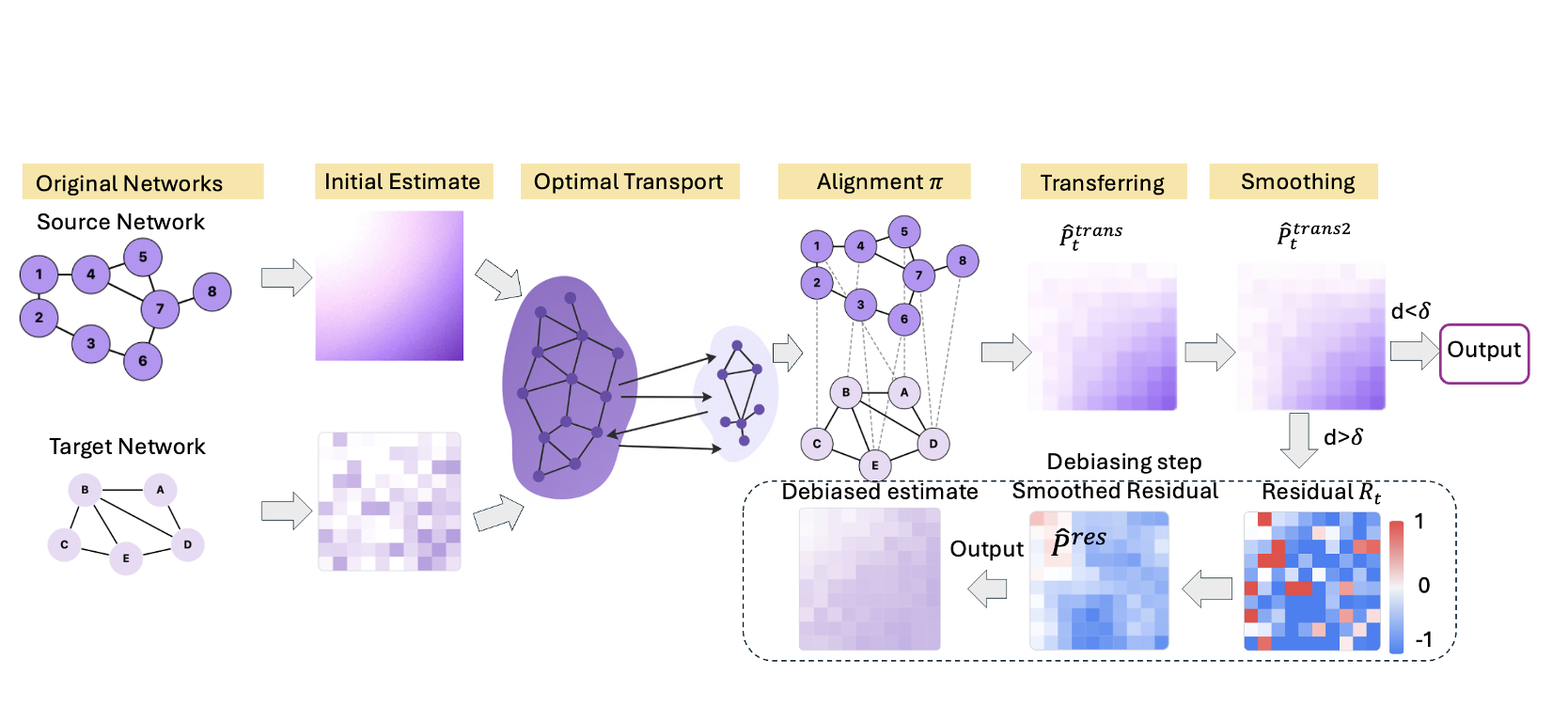

- 2025.09: 🎉 My first-author paper “Transfer Learning on Edge Connecting Probability Estimation Under Graphon Model” was accepted to NeurIPS 2025!

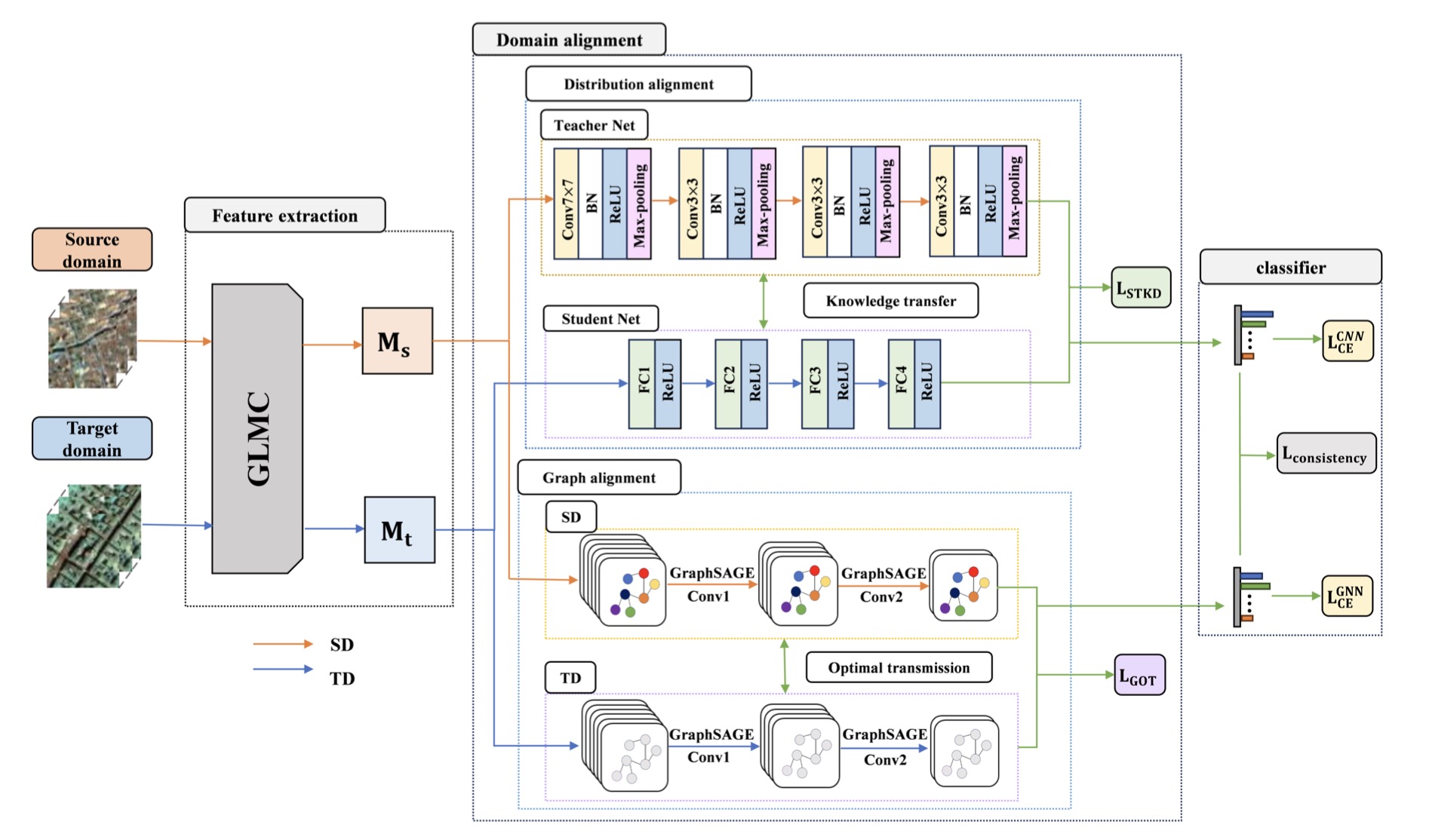

- 2025.08: 🎉 My co-authored paper “Cross-Domain Hyperspectral Image Classification via Mamba-CNN and Knowledge Distillation” was accepted by IEEE TGRS 2025!

📝 Publications

Leading Author

Transfer Learning on Edge Connecting Probability Estimation Under Graphon Model

From Text to Forecasts: Bridging Modality Gap with Temporal Evolution Semantic Space

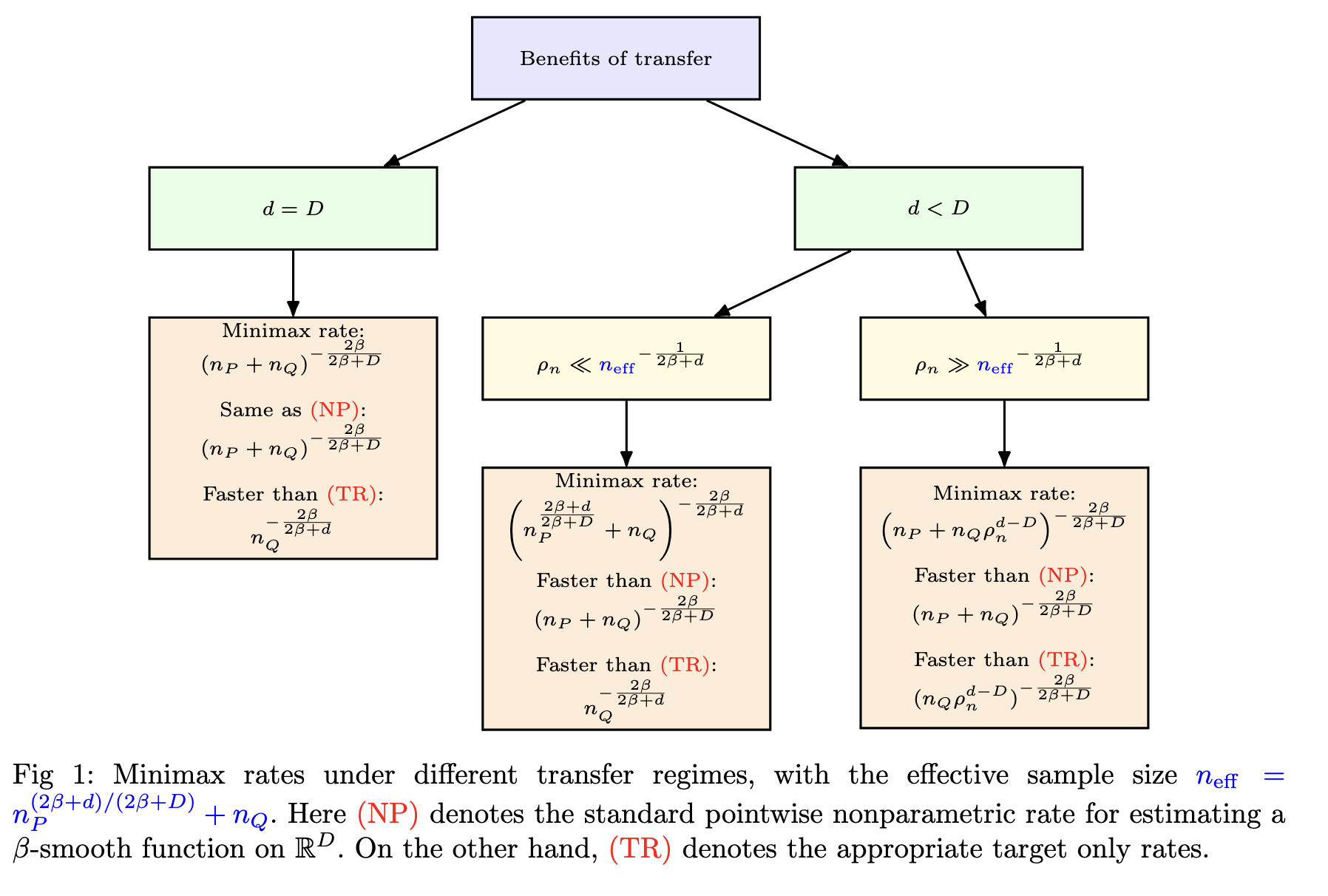

Phase Transition in Nonparametric Minimax Rates for Covariate Shifts on Approximate Manifolds

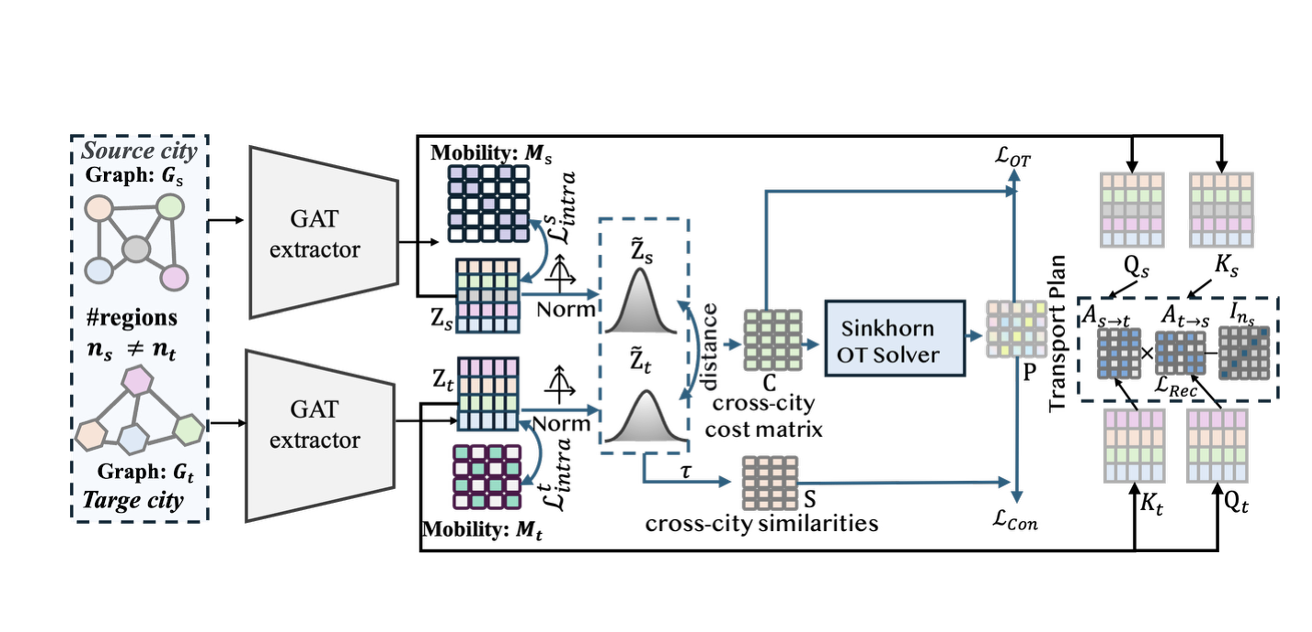

SCOT: Multi-Source Cross-City Transfer with Optimal-Transport Soft-Correspondence Objectives

Co-author

Network Perturbation Aggregation for Graphon Estimation

Cross-Domain Hyperspectral Image Classification via Mamba-CNN and Knowledge Distillation

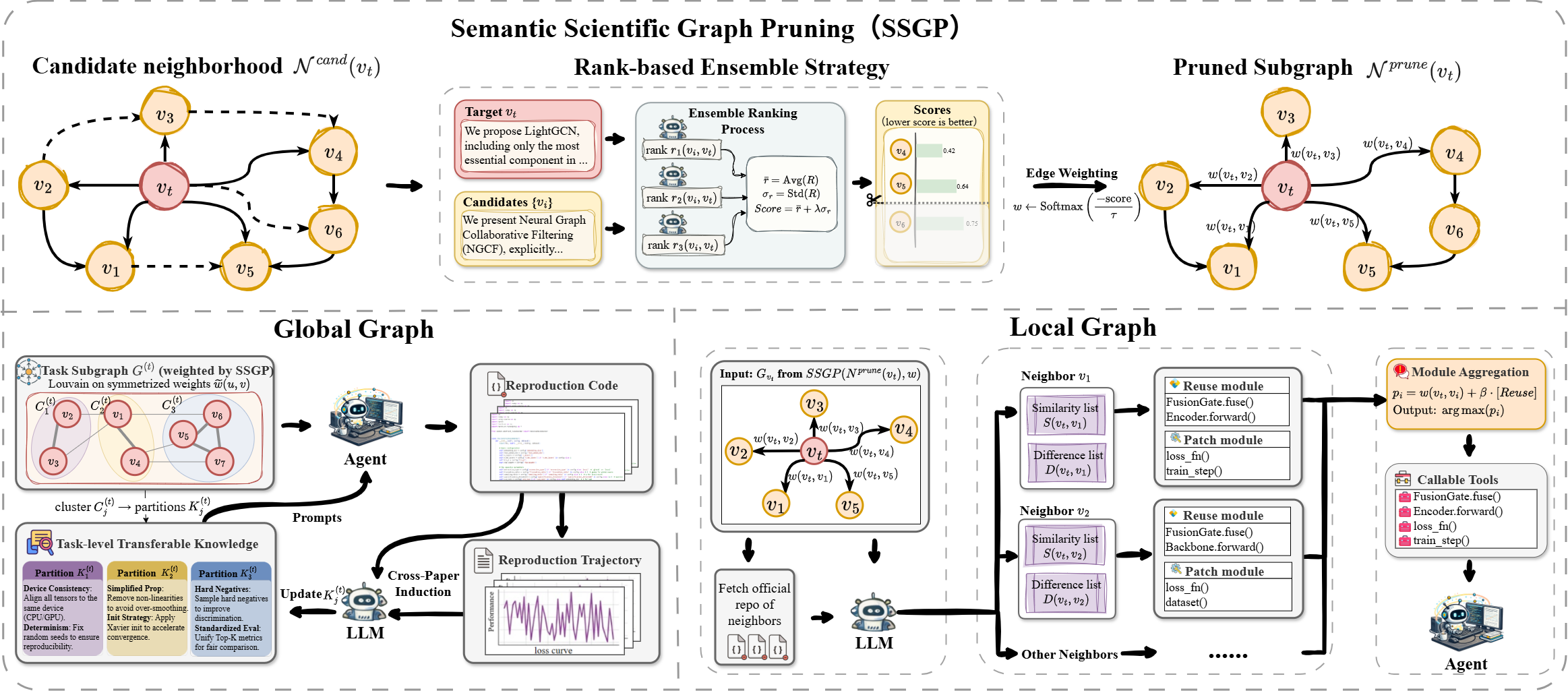

Semantic Scientific Graph Pruning for Reliable Agentic Paper Reproduction

🤖 LLM Engineering Projects

AlignDPO (DPO · IPO · KTO · QLoRA · Mistral-7B · HH-RLHF)

RAGAudit: Hallucination Detection (BM25+FAISS · NLI · SelfCheckGPT · sem. entropy · Mistral-7B)

Congestion Pricing Analyzer (TWFE · CS-DiD · Synth DiD · Double ML · 12M+ NYC TLC)

CausalLens: LLM-Augmented Causal Pipeline (DoWhy · Double ML · Causal Forest · Claude API · Streamlit)

GraphRAG: Multimodal RAG (dense + entity graph + CLIP · FastAPI · ChromaDB)

Adaptive RAG (query routing · iterative retrieval · self-check · FastAPI)

DraftVerify: Speculative Decoding (draft + verifier · latency · throughput · acceptance)

HQQ: 1-bit Quantization (1–8 bit · proximal opt · W1G64: 12.7× · >4× speedup)

Causal Promotion Optimization (AIPW · LightGBM · DRLearner CATE · OR-Tools · FastAPI)

Demand Forecasting (Seasonal Naive · LightGBM · TFT · M5 · 28-day · store-SKU)

📖 Education

- 2021.09 – Now: Ph.D. in Statistics, Boston University

- 2019.09 – 2020.05: M.A. in Statistics (Data Science Track), Columbia University

- 2018.05 – 2019.06: B.S. in Mathematics, Chinese Academy of Sciences (Jointly Supervised Talent Program)

- 2015.09 – 2019.06: B.S. in Mathematics, Shandong University

💻 Internships

Data Scientist Intern · Plymouth Rock Insurance

📍 Boston, MA · 🗓️ May 2025 – Aug 2025

- Architected an end-to-end AWS SageMaker pipeline for property-level loss prediction using an XGBoost Tweedie model on multi-million-policy data, lifting Gini by +4.3% over the production baseline and directly improving underwriting risk segmentation.

- Pioneered an LLM-powered visual risk scoring system combining GPT-4o multimodal reasoning with Google Street View imagery to capture previously unobservable property features (roof condition, surroundings, hazards); integrated outputs into downstream actuarial pricing models as a novel signal layer.

- 📎 For a high-level, non-confidential summary of this work, see the Home Insurance slides.

✨ My Apps

A quiet collection of cinematic, atmospheric, and emotionally resonant side projects: part digital keepsakes, part memory-keepers.

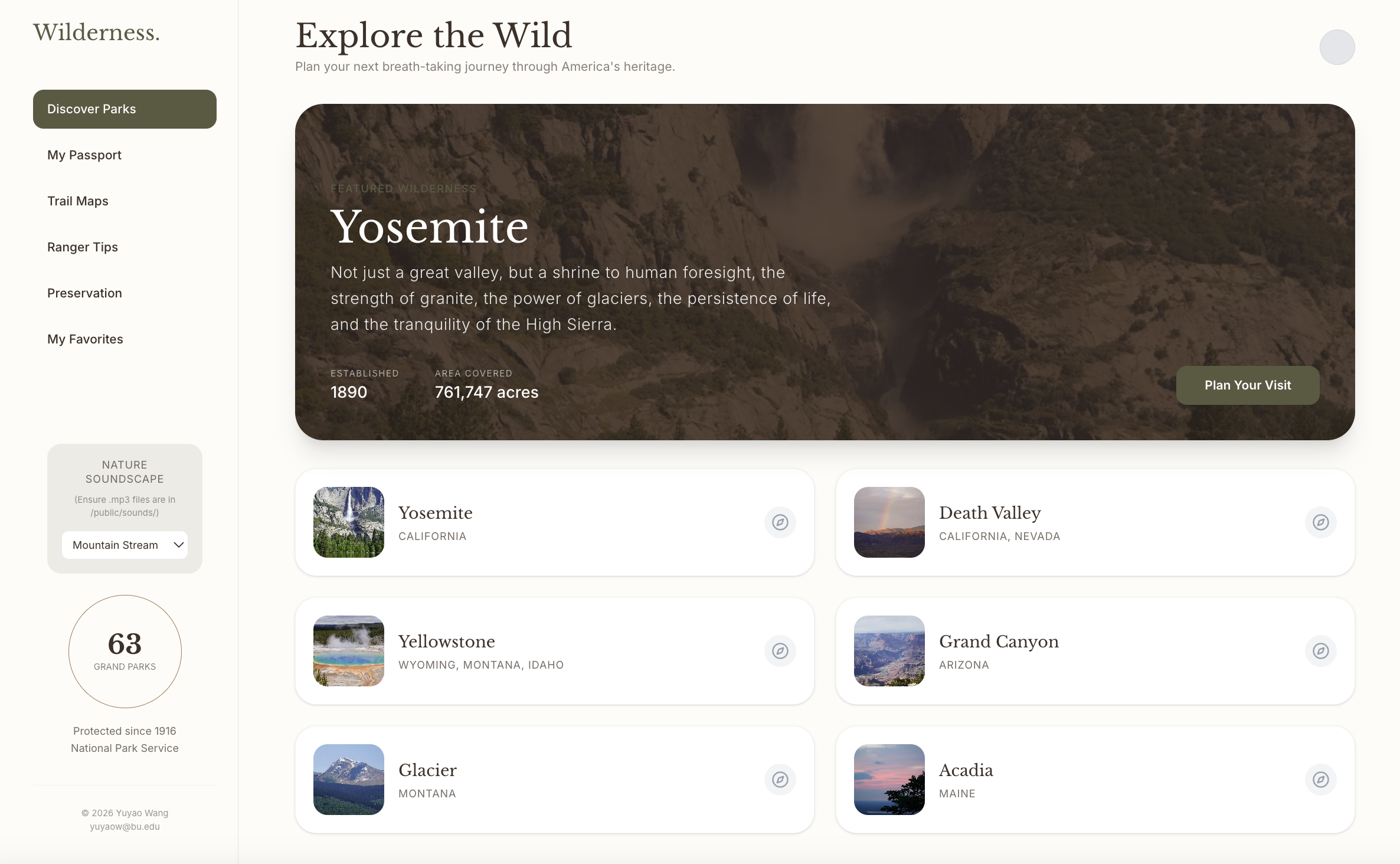

🌲 Wilderness |

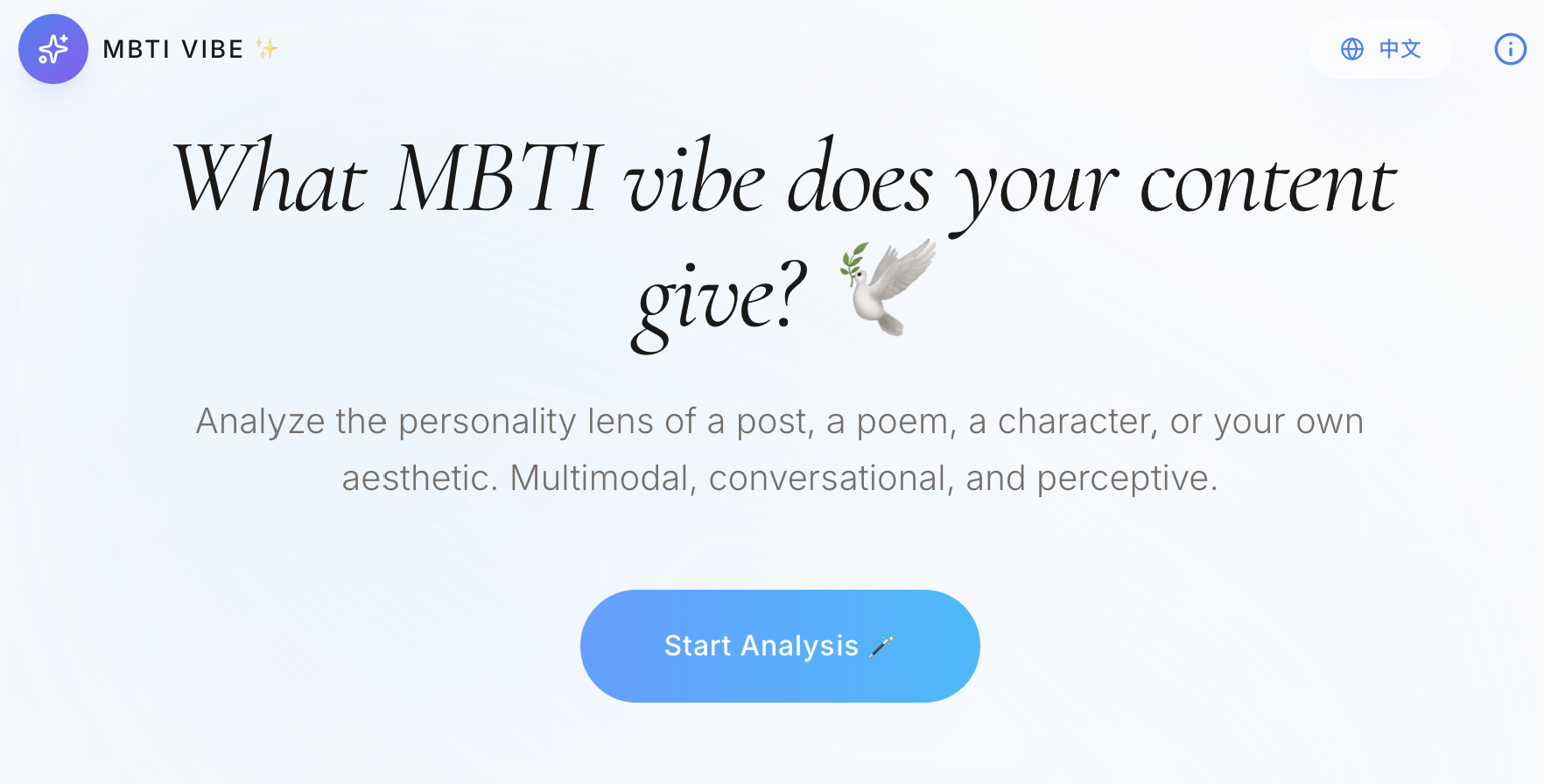

✨ MBTI Vibe |

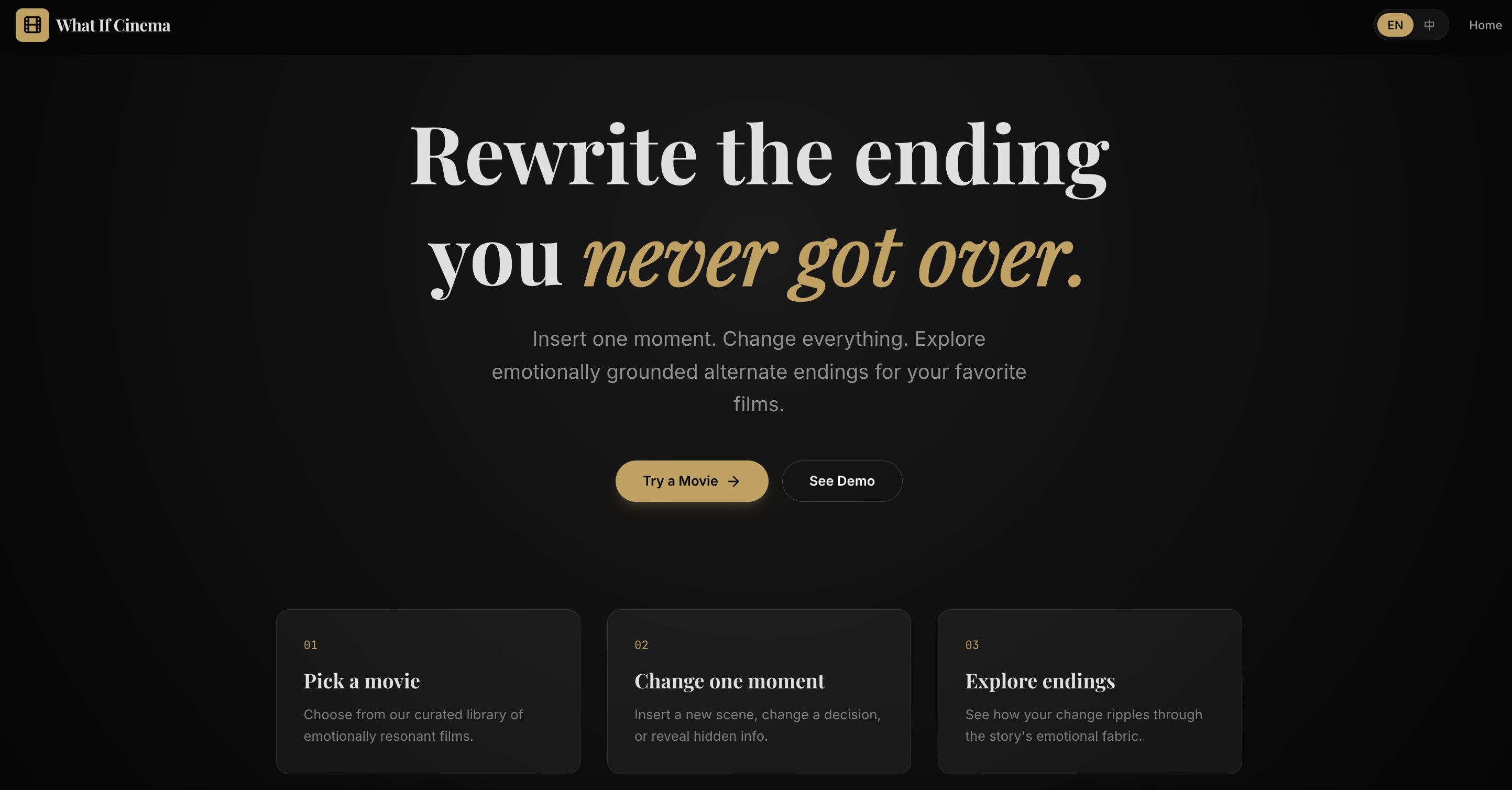

🎬 What If Cinema |

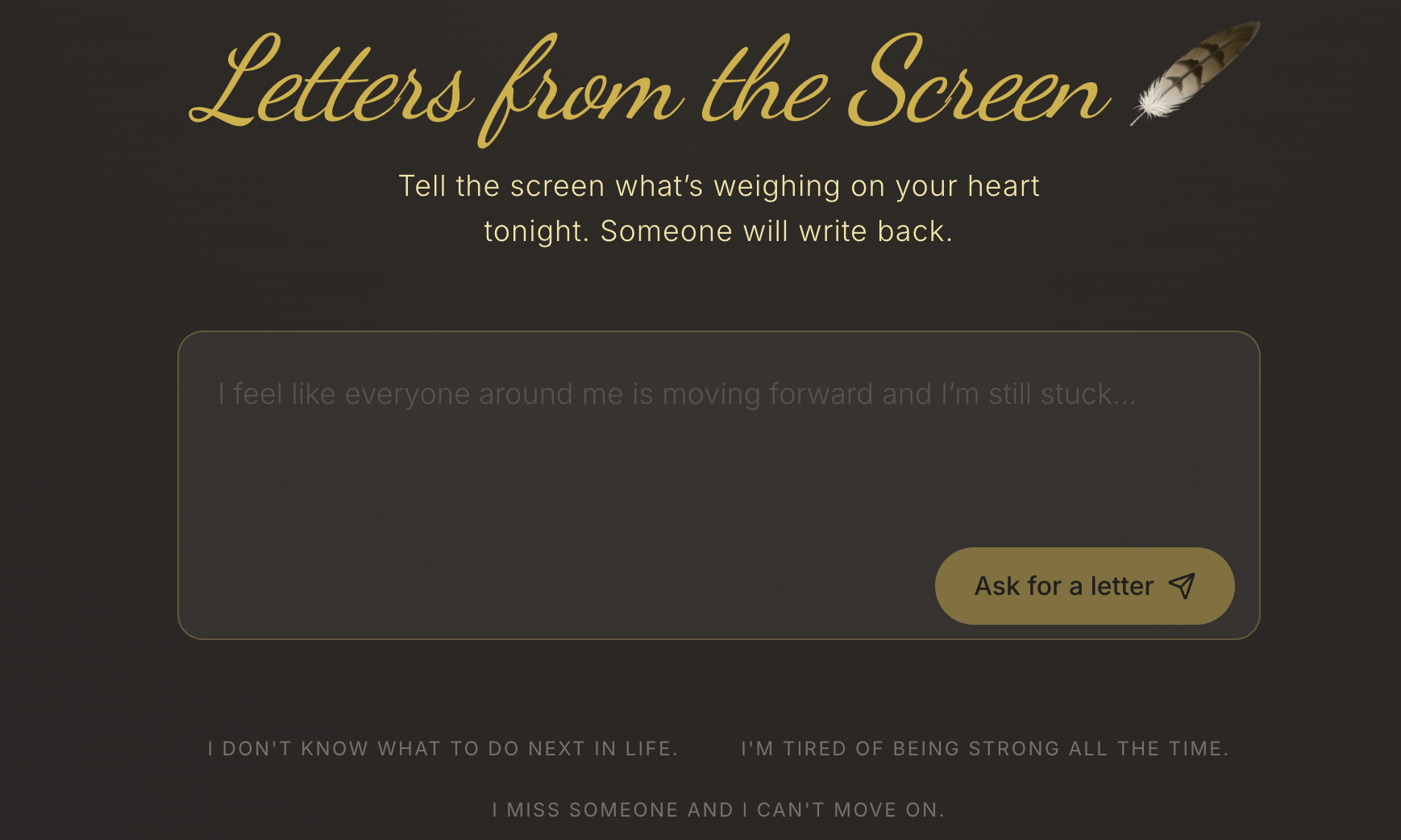

✉️ Letters from Screen |

✈️ If You Disappeared |

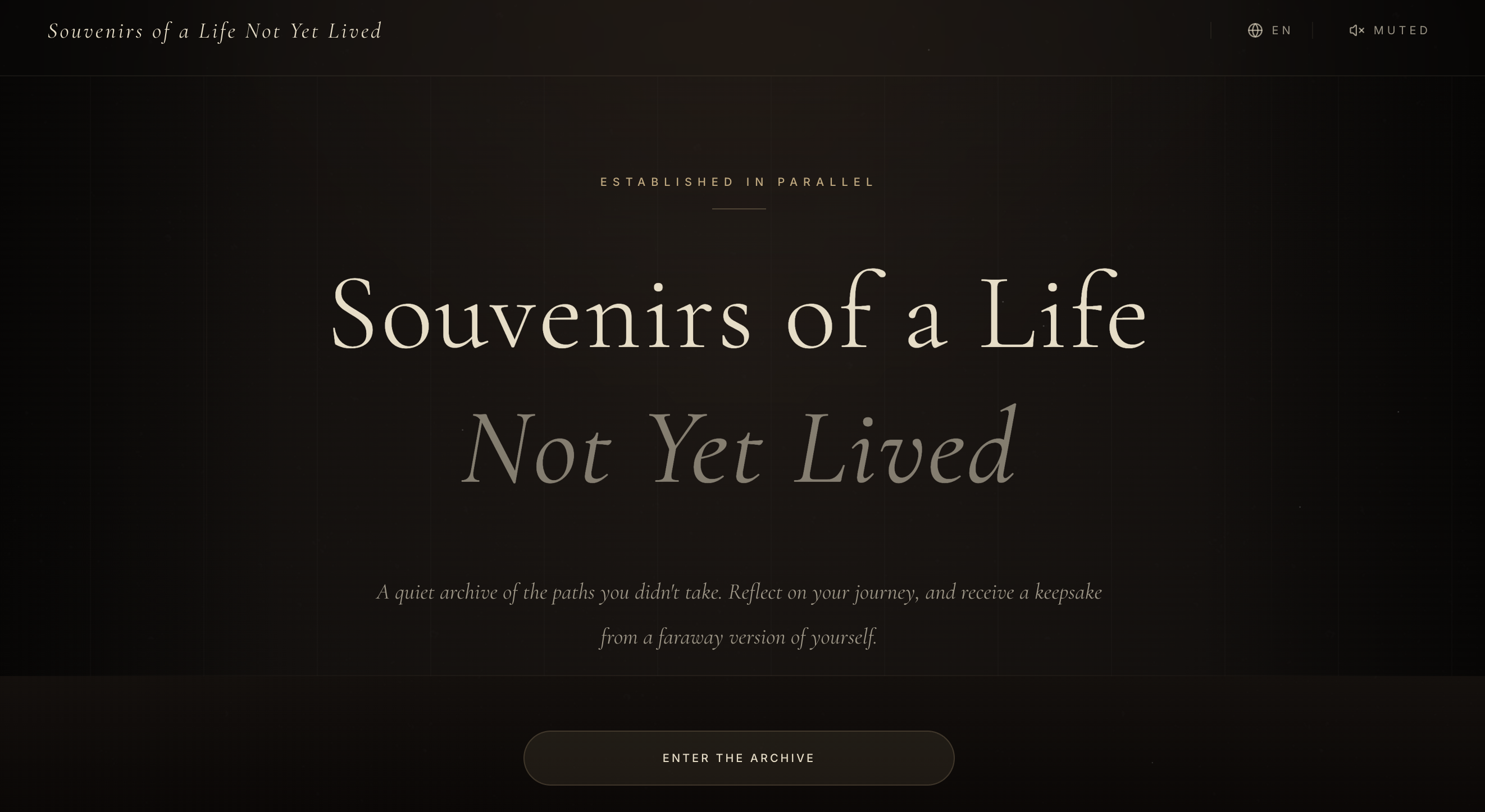

🎟️ Souvenirs |

🗺️ Map of Me |

🦋 A Room in Macondo |

✒️ Say It Like a Classic |

🏛️ Boston Archive |

🎖 Honors

- 2026: Dean’s Dissertation Fellowship, Boston University

- 2025: Student Travel Grant, Boston University

- 2025: Ralph B. D’Agostino Endowed Fellowship, Boston University

- 2025: Outstanding Teaching Fellow Award, Boston University

- 2019: Outstanding Graduate, Shandong University

- 2018: Hua Loo-Keng Scholarship, Chinese Academy of Sciences

- 2018: National Gold Award, Internet+ Innovation & Entrepreneurship Competition

- 2018: First-Class Scholarship, Shandong University

- 2018: Outstanding Student Leader, Shandong University

📂 DS Projects

Dog Classification

Financial Sentiment

Airbnb Dashboard

Bayesian Logistic

Time Series Forecast

📝 Service & Teaching

Presentations · CIKM 2024, NeurIPS 2025

Reviewer · CIKM 2025, ICME 2026, ICML 2026, KDD 2026

Instructor @ Boston University · MA 582 Mathematical Statistics, MA 113 Elementary Statistics

TA @ Boston University · MA 575 Generalized Linear Models, MA 582, MA 415 Data Science in R, MA 214 Applied Stats

🎨 Interests

🎵 Mandarin R&B loyalist: Leehom Wang, David Tao, Khalil Fong🦋, Dean Ting

🎹 Trained in piano, calligraphy, and ink painting

🏞️ National park lover · 🫧 lake admirer · 🌅 opacarophile · welcome to my Gallery